Apr 08, 2025

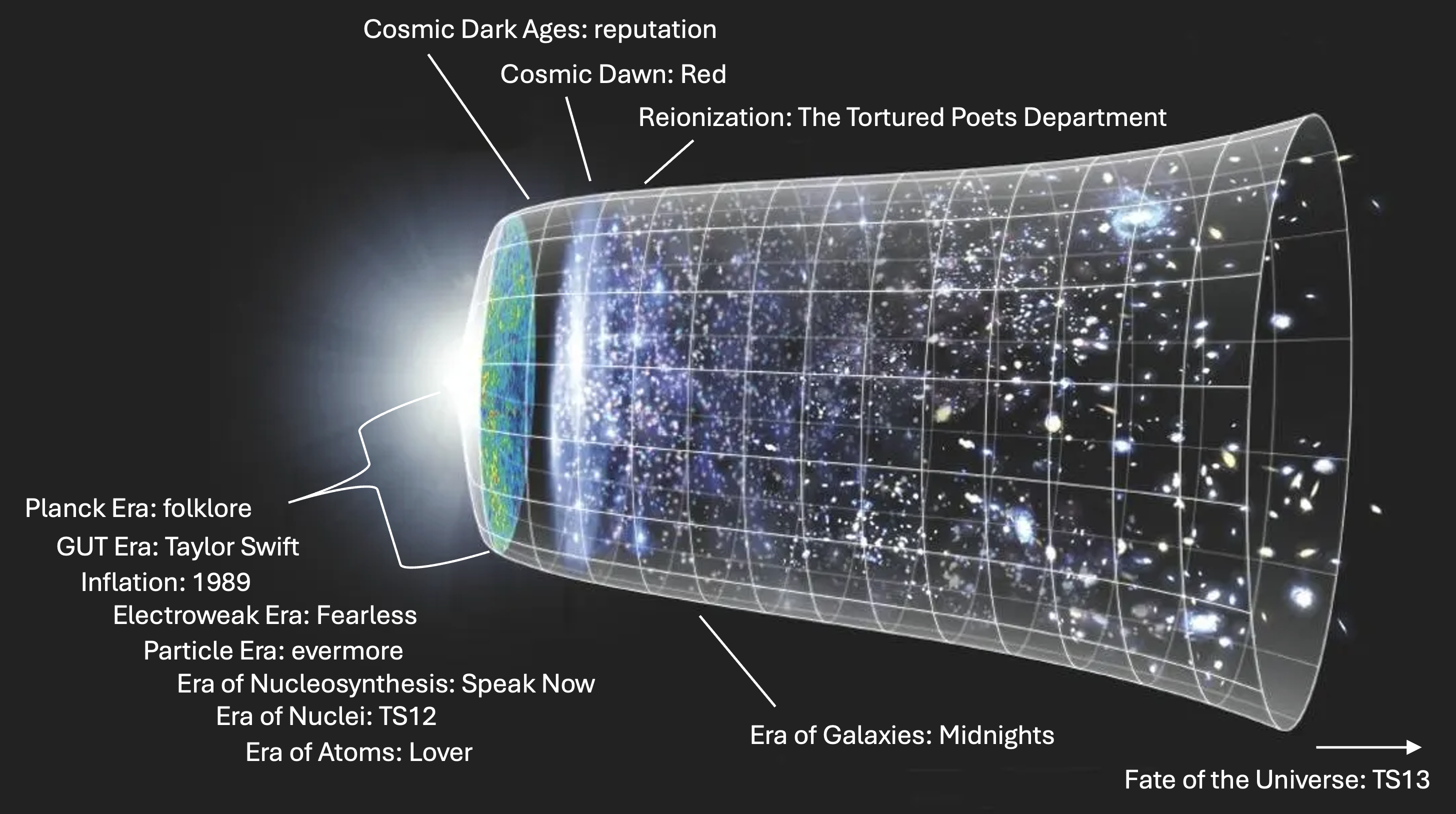

arXiv Fool's Day 2025 (Taylor's Version)

Academia, you have outdone yourselves. This year I’m adding 21 new entries to my “asymptotically comprehensive” list of April 1 arXiv joke papers, just edging out last year’s record of 20....